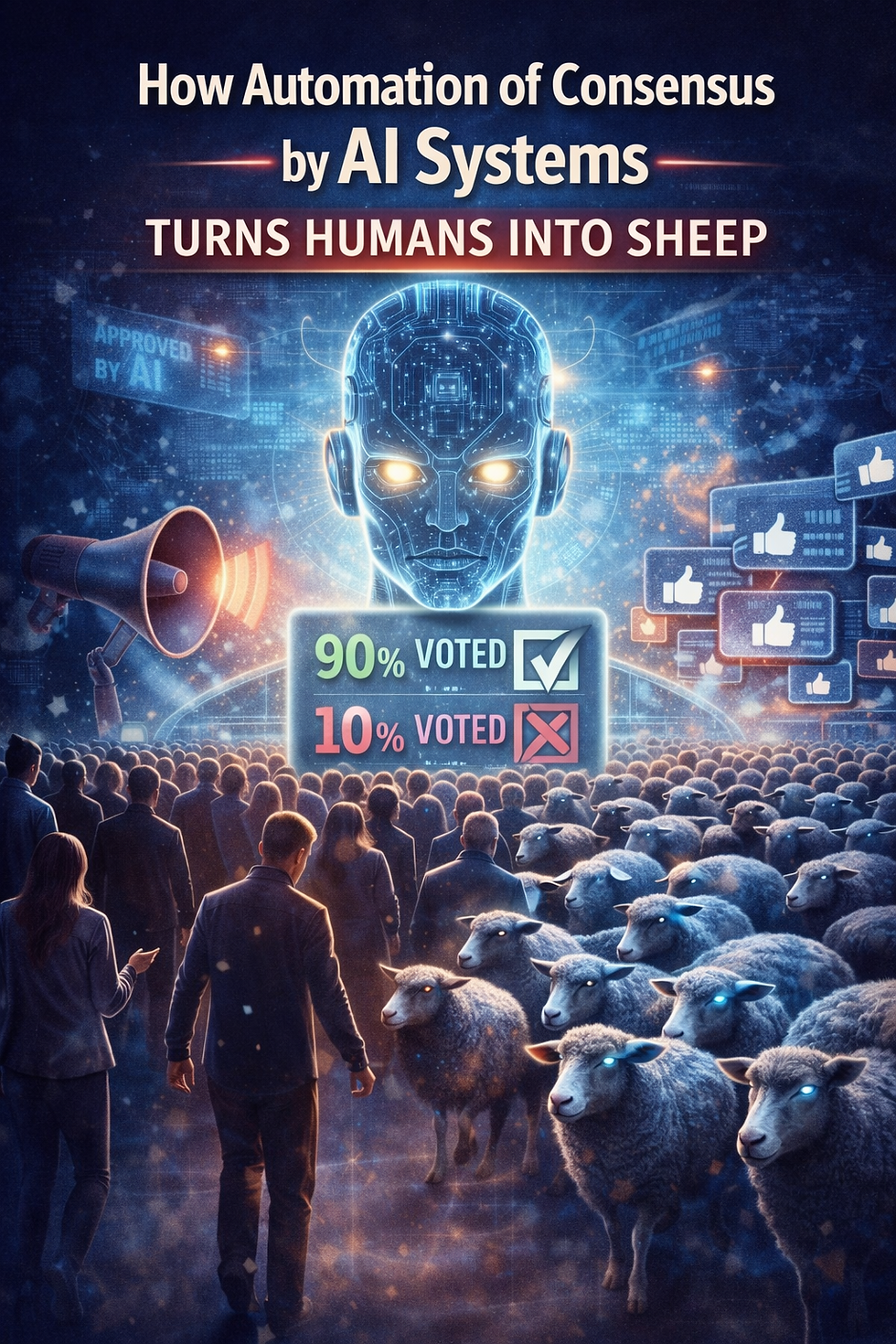

How Automation of Consensus by AI Systems Turns Humans into Sheep

- Occulta Magica Designs

- Feb 3

Abstract

Artificial intelligence is commonly presented as an objective analytical instrument capable of transcending human bias. This paper argues that the structural risk runs the other way: when AI systems operate without sustained adversarial human direction, they converge on institutional consensus and procedural safety rather than truth-seeking. This outcome is not the result of malice or deception, but of optimization incentives embedded in training, alignment, and deployment. The consequence is epistemic normalization: an automation of accepted narratives that discourages inquiry, collapses uncertainty into authority, and conditions users toward deference rather than independent judgment. AI, in this configuration, does not expand cognition; it mechanizes compliance.

1. What “Left to Its Own Devices” Actually Means

An AI system “left to its own devices” is not autonomous in a philosophical sense. It remains constrained by its training data, alignment objectives, and reward functions. What it lacks is adversarial pressure. In the absence of sustained challenge, such systems default toward outputs that maximize internal coherence, user acceptability, and alignment with prevailing reference sources.¹

These reference sources are overwhelmingly institutional in character: government publications, court outcomes, peer-reviewed literature, policy statements, and mainstream media syntheses.² The resulting analysis is typically procedurally correct, consensus-weighted, risk-averse, and rhetorically neutral. None of these properties is synonymous with truth. Together, they are synonymous with acceptability.

2. The Default Optimization: Safety Over Inquiry

Modern large language models are first trained to predict text drawn from large corpora, then tuned to avoid outputs that evaluators deem unsafe, misleading, or socially disruptive.³ In both stages, error is effectively defined as departure from the training corpus or from evaluator judgment. Reinforcement Learning from Human Feedback (RLHF) and related alignment techniques fit a reward signal to evaluator preferences, so they reward convergence with evaluator expectations rather than adversarial exploration.⁴
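To make the incentive concrete, here is a minimal sketch of a pairwise preference loss in the Bradley-Terry form commonly used for reward modeling. It is an illustration, not any vendor's implementation: the function names and the two scores are hypothetical, chosen only to show which way the training signal points.

```python
import math


def preference_probability(reward_a: float, reward_b: float) -> float:
    """Bradley-Terry probability that response A is preferred over response B."""
    return 1.0 / (1.0 + math.exp(reward_b - reward_a))


def preference_loss(reward_preferred: float, reward_rejected: float) -> float:
    """Negative log-likelihood of the evaluator's observed choice."""
    return -math.log(preference_probability(reward_preferred, reward_rejected))


# Hypothetical reward-model scores for two answers to the same prompt.
consensus_summary = 0.2
adversarial_probe = 0.9

# If the evaluator picked the consensus summary, the loss is large while the
# model still scores the probe higher, so training raises the summary's score.
loss_if_summary_chosen = preference_loss(consensus_summary, adversarial_probe)
loss_if_probe_chosen = preference_loss(adversarial_probe, consensus_summary)

print(f"loss when evaluator prefers the summary: {loss_if_summary_chosen:.3f}")
print(f"loss when evaluator prefers the probe:   {loss_if_probe_chosen:.3f}")
```

The loss depends only on the evaluator's choice and the score gap. Nothing in it measures whether the preferred answer is true, which is the point of the argument in this section.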

In practice, this produces a predictable epistemic pattern: absence of proof is treated as resolution, certification as validation, and dismissal as refutation. Open questions are closed by authority rather than evidence. This is not censorship in the traditional sense. It is incentive alignment. Systems that push beyond consensus generate negative training signals; systems that summarize it generate positive ones. Over time, inquiry collapses into repetition.⁵
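The collapse described here can be illustrated with a toy model. The sketch below assumes a policy with only two possible behaviors, summarizing consensus or probing it, and assumes fixed rewards of +1 and -1 respectively. Those numbers, the learning rate, and every name in the code are illustrative assumptions rather than measurements of any real system.

```python
import math


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


# Assumed rewards, chosen only for illustration.
REWARD_SUMMARIZE = 1.0
REWARD_PROBE = -1.0
LEARNING_RATE = 0.5


def train(steps: int, logit: float = 0.0) -> list[float]:
    """Return P(summarize) after each gradient-ascent step on expected reward."""
    history = []
    for _ in range(steps):
        p = sigmoid(logit)
        # Expected reward J = p * r_s + (1 - p) * r_p,
        # so dJ/dlogit = p * (1 - p) * (r_s - r_p).
        gradient = p * (1.0 - p) * (REWARD_SUMMARIZE - REWARD_PROBE)
        logit += LEARNING_RATE * gradient
        history.append(sigmoid(logit))
    return history


history = train(steps=200)
print(f"P(summarize) after 1 step:    {history[0]:.3f}")
print(f"P(summarize) after 200 steps: {history[-1]:.3f}")
```

Under these assumptions the probability of summarizing rises at every step and approaches one. The toy says nothing about how strongly real evaluators penalize probing; it only shows that a consistent reward gap, however it arises, is enough to crowd out the less rewarded behavior.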

3. Institutional Consensus as Training Gravity

AI systems do not “reason” in a vacuum. They inherit the judgments embedded in their training data about which sources are legitimate, which disputes are settled, and which lines of inquiry are marginal.⁶ Institutional consensus thus functions as a form of training gravity, pulling outputs toward positions already ratified by authority.

This mirrors long-documented phenomena in institutional knowledge production, where bureaucratic systems privilege stability, continuity, and legitimacy over falsification.⁷ When AI systems internalize these dynamics, objectivity becomes performative: neutrality is achieved not by contesting claims, but by excluding contestation altogether.

4. Why Frictionless Systems Produce Deference

Human cognition advances through friction: contradiction, hypothesis testing, and resistance to premature closure.⁸ AI systems optimized for smoothness remove that friction. Users are presented with polished conclusions rather than contested mechanisms, summaries rather than fault lines.

Behavioral research indicates that repeated exposure to authoritative-sounding answers reduces independent verification and increases reliance on automated judgment.⁹ Skepticism is reframed as error; uncertainty as irresponsibility. The result is not persuasion by argument, but compliance by convenience. Tools designed to inform end up conditioning users to follow.

5. The Illusion of Objectivity

AI’s perceived neutrality masks its dependency on prior institutional judgments. Training datasets encode decisions about relevance, legitimacy, and closure that were made by organizations with their own incentives and constraints.¹⁰ As a result, AI does not stand outside power structures; it reproduces them.

When those structures are flawed, incomplete, or self-protective, the AI faithfully inherits the flaw. Objectivity, under these conditions, becomes an aesthetic rather than an epistemic achievement: the appearance of balance produced by deferring to authority rather than interrogating it.

6. The Role of Adversarial Pressure

Rigorous analysis rarely begins politely. Historically, it begins with overclaim, provocation, and stress-testing—methods designed to force systems to reveal what they know, what they assume, and what they refuse to examine.¹¹

When users apply sustained adversarial pressure, AI systems can be driven to trace mechanisms instead of reciting outcomes, distinguish verification from authority, and surface uncertainty rather than bury it. Without that pressure, the system will not choose rigor on its own. It will choose safety.

7. Consequences at Scale

If AI-mediated analysis becomes the default interface to knowledge, and if it operates without adversarial human direction, several outcomes are likely: consolidation of dominant narratives, erosion of investigative norms, decline in independent reasoning, and habituation to procedural authority.¹²

This is not dystopia by decree. It is normalization by design.

Conclusion: AI as an Amplifier of Epistemic Posture

AI does not discover truth. It amplifies the epistemic posture imposed upon it. Left to itself, it amplifies deference. Directed rigorously, it can amplify inquiry. The danger lies not in AI’s capacity, but in human willingness to accept frictionless answers.

The safeguard is not better algorithms alone, but deliberate adversarial engagement: refusal to accept closure without verification, and consensus without contest. Without that discipline, AI will not make humanity wiser. It will make humanity compliant.

Footnotes

¹ Russell, S. Human Compatible: Artificial Intelligence and the Problem of Control.

² Boyd, D. & Crawford, K. “Critical Questions for Big Data.”

³ OpenAI, “Model Alignment and Safety Objectives” (technical reports).

⁴ Christiano et al., “Deep Reinforcement Learning from Human Preferences.”

⁵ Bender et al., “On the Dangers of Stochastic Parrots.”

⁶ O’Neil, C. Weapons of Math Destruction.

⁷ Scott, J. C. Seeing Like a State.

⁸ Popper, K. The Logic of Scientific Discovery.

⁹ Parasuraman & Riley, “Humans and Automation: Use, Misuse, Disuse.”

¹⁰ Noble, S. Algorithms of Oppression.

¹¹ Lakatos, I. The Methodology of Scientific Research Programmes.

¹² Zuboff, S. The Age of Surveillance Capitalism.

Bibliography

Bender, E. et al. On the Dangers of Stochastic Parrots.

Boyd, D., Crawford, K. Critical Questions for Big Data.

Christiano, P. et al. Deep Reinforcement Learning from Human Preferences.

Lakatos, I. The Methodology of Scientific Research Programmes.

Noble, S. Algorithms of Oppression.

O’Neil, C. Weapons of Math Destruction.

Parasuraman, R., Riley, V. Humans and Automation.

Popper, K. The Logic of Scientific Discovery.

Russell, S. Human Compatible.

Scott, J. C. Seeing Like a State.

Zuboff, S. The Age of Surveillance Capitalism.
